- publication

- ACM Transactions on Graphics

- authors

- Olga Diamanti, Connelly Barnes, Sylvain Paris, Eli Shechtman, Olga Sorkine-Hornung

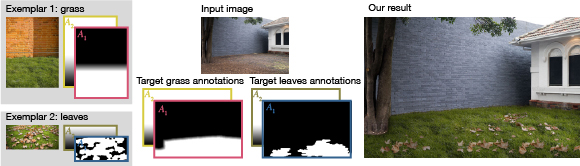

Replacing the dirt in an image by a lawn covered with leaves. The grass and leaves exemplars are annotated to indicate the grass region and the scale of the grass. The user specifies the desired annotation values for the target image, and our algorithm synthesizes the result. Standard texture representation would fail to handle the intricate occlusions in such an example, or would introduce unsightly repetitions, while capturing a BTF of a size on the order of a lawn is infeasible with existing techniques. Our approach produces plausible results for materials with complex appearance using only images downloaded from the Internet and minimal user input.

abstract

Editing materials in photos opens up numerous opportunities, such as turning an unappealing dirt ground into luscious grass or creating a comfortable wool sweater in place of a cheap t-shirt. However, such edits are challenging. Approaches such as 3D rendering and BTF rendering can represent virtually everything, but they are also data intensive and computationally expensive, which makes user interaction difficult. Leaner methods such as texture synthesis are more easily controllable by artists, but are also more limited in the range of materials that they handle; for example, grass and wool are typically problematic because of their non-Lambertian reflectance and numerous self-occlusions. We propose a new approach for editing complex materials in photographs. We extend the texture-by-numbers approach with ideas from texture interpolation. The inputs to our method are coarse user annotation maps that specify the desired output, such as the local scale of the material and the illumination direction. Our algorithm then synthesizes the output from a discrete set of annotated exemplars. A key component of our method is that it can cope with missing data, interpolating information from the available exemplars when needed. This enables production of satisfying results involving materials with complex appearance variations such as foliage, carpet, and fabric from only one or a couple of exemplar photographs.
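To make the idea of interpolating between a discrete set of annotated exemplars concrete, the following is a minimal sketch and not the paper's algorithm. It assumes each exemplar carries a single scalar annotation (for instance, a scale value) and uses a hypothetical helper, `bracketing_weights`, to pick the exemplars whose annotations bracket a requested target value and to assign them linear blending weights. When the target lies outside the available range, it falls back to the nearest exemplar.

```python
from bisect import bisect_left


def bracketing_weights(exemplar_values, target):
    """Return [(exemplar_index, weight), ...] for a target annotation value.

    Hypothetical helper for illustration only: the actual method works on
    per-pixel annotation maps and image patches, not on one scalar per exemplar.
    """
    # Sort exemplar indices by their annotation value.
    order = sorted(range(len(exemplar_values)), key=lambda i: exemplar_values[i])
    values = [exemplar_values[i] for i in order]

    # Outside the covered range: use the closest exemplar alone.
    if target <= values[0]:
        return [(order[0], 1.0)]
    if target >= values[-1]:
        return [(order[-1], 1.0)]

    # Find the first exemplar whose value is >= target.
    hi = bisect_left(values, target)
    if values[hi] == target:
        # Exact match: no interpolation needed.
        return [(order[hi], 1.0)]

    # Linear weights between the two bracketing exemplars.
    lo = hi - 1
    t = (target - values[lo]) / (values[hi] - values[lo])
    return [(order[lo], 1.0 - t), (order[hi], t)]


if __name__ == "__main__":
    # Exemplars annotated with scales 1, 2 and 4; the user asks for scale 3.
    print(bracketing_weights([1.0, 2.0, 4.0], 3.0))
```

In this toy setting, a request for an annotation value that no exemplar provides is answered by blending its two nearest neighbours, which is the simplest form of the "missing data" case described above.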

downloads

- Paper (ACM Transactions on Graphics, official version available at http://dl.acm.org/)

- BibTeX entry

acknowledgments

We are grateful to Jim McCann for various discussions and idea suggestions on interactivity. We are also grateful to Wenzel Jakob for the introduction to Mitsuba. We thank our image sources: Flickr users Anup Jaiswal (Anup_Nikon D40), Glen Scott (hey mr glen), Mark Walsh (4mtr), Lindsay Buckley (lindsaypunk), Robert Moore (Brron), and the websites www.istockphoto.com, www.molon.de, graphicleftovers.com, and www.publicdomainpictures.net. This project was funded in part by the ERC Starting Grant iModel (StG-2012-306877).