- publication: ACM SIGGRAPH 2019
- demo: Emerging Technologies at SIGGRAPH 2019
- authors: Oliver Glauser, Shihao Wu, Daniele Panozzo, Otmar Hilliges, Olga Sorkine-Hornung

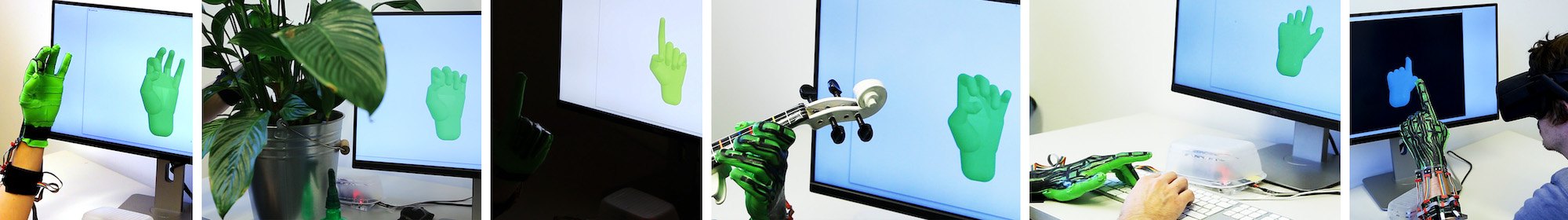

Our stretch-sensing soft glove captures hand poses in real time and with high accuracy. It functions in diverse and challenging settings, like heavily occluded environments or changing light conditions, and lends itself to various applications. All images shown here are frames from recorded live sessions.

abstract

We propose a stretch-sensing soft glove to interactively capture hand poses with high accuracy and without requiring an external optical setup. We demonstrate how our device can be fabricated and calibrated at low cost, using simple tools available in most fabrication labs. To reconstruct the pose from the capacitive sensors embedded in the glove, we propose a deep network architecture that exploits the spatial layout of the sensor itself. The network is trained only once, using an inexpensive off-the-shelf hand pose reconstruction system to gather the training data. The per-user calibration is then performed on the fly using only the glove. The glove's capabilities are demonstrated in a series of ablative experiments that explore different models and calibration methods. Compared with commercial data gloves, our approach achieves a 35% improvement in reconstruction accuracy.
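The abstract notes that the network exploits the spatial layout of the sensor. One way to picture this idea is to scatter the flat vector of capacitive readings into a 2D grid that mirrors where each sensor sits, so that a convolution mixes readings from physically neighboring sensors. The following is a minimal sketch under stated assumptions, not the paper's architecture: the `layout` mapping, grid size, and kernel are hypothetical placeholders, and the actual sensor layout and network are described in the paper.

```python
from typing import Dict, List, Sequence, Tuple

# HYPOTHETICAL: maps sensor index -> (row, col) cell of a 2D grid that
# mirrors each sensor's physical position. The real glove layout is not given here.
Layout = Dict[int, Tuple[int, int]]


def to_grid(readings: Sequence[float], layout: Layout, rows: int, cols: int) -> List[List[float]]:
    """Scatter a flat vector of sensor readings into a rows x cols grid.

    Cells that no sensor maps to stay at 0.0.
    """
    grid = [[0.0] * cols for _ in range(rows)]
    for idx, (r, c) in layout.items():
        grid[r][c] = readings[idx]
    return grid


def conv2d_same(grid: List[List[float]], kernel: List[List[float]]) -> List[List[float]]:
    """2D cross-correlation with an odd-sized kernel and zero padding.

    The output has the same size as the input, so each output cell combines
    the readings of its spatial neighbours on the grid.
    """
    rows, cols = len(grid), len(grid[0])
    k = len(kernel)
    pad = k // 2
    out = [[0.0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            acc = 0.0
            for i in range(k):
                for j in range(k):
                    rr, cc = r + i - pad, c + j - pad
                    # Positions outside the grid act as zero padding.
                    if 0 <= rr < rows and 0 <= cc < cols:
                        acc += kernel[i][j] * grid[rr][cc]
            out[r][c] = acc
    return out


if __name__ == "__main__":
    # Toy example: four sensors placed on a 2x2 grid.
    layout = {0: (0, 0), 1: (0, 1), 2: (1, 0), 3: (1, 1)}
    grid = to_grid([1.0, 2.0, 3.0, 4.0], layout, rows=2, cols=2)
    ones = [[1.0] * 3 for _ in range(3)]
    print(conv2d_same(grid, ones))
```

Unlike a fully connected layer over the flat vector, the convolution ties each output to nearby sensors only, which is the intuition behind exploiting the spatial layout. A learned network would stack many such layers with trained kernels.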

downloads

- Paper (ACM SIGGRAPH 2019, official version available at http://portal.acm.org/)

- Video

- Dataset

- BibTex entry


acknowledgments

We would like to thank Severin Klingler, Christian Schüller, Velko Vechev and Katja Wolff for insightful discussions, John Sivell for narrating the video, and all the participants in the dataset collection. This work was supported in part by the SNF grant 200021_162958, the NSF CAREER award IIS-1652515, the NSF grant OAC:1835712, GPU donations from the NVIDIA Corporation and a gift from Adobe.